When four companies spend $600-700B in capital on infrastructure, roughly the GDP of a country like Sweden, they don’t just build data centers – they break global supply chains. In a world where advanced components are scarce and pricing curves have turned vertical, enterprises face a stark choice: cling to purist architectures built for yesterday’s component abundance, or design for flexibility in a market defined by constraint.

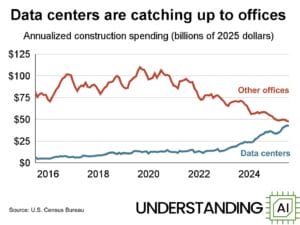

There is a quiet reordering of the global economy underway, and it is not happening in oil fields or shipping lanes. It is happening in semiconductor fabs, disk drive factories, and hyperscale data center construction sites.

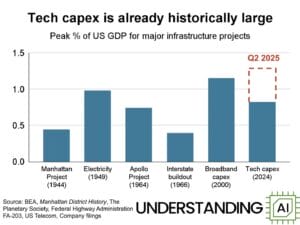

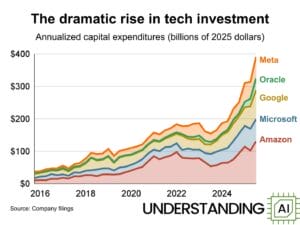

To understand what is unfolding, start with a simple comparison. The combined capital expenditures of Amazon, Microsoft, Google, and Oracle now approach levels comparable to the annual gross domestic product of Sweden. And unlike GDP, which ebbs and flows with economic cycles, hyperscaler CapEx is forecast to grow steadily through the end of the decade.

Source: Understanding AI, “16 Charts That Explain the AI Boom” (2025)

This is not abstract. It is reshaping the hardware market in real time.

Hyperscalers are absorbing, in some quarters, between 60 percent and 80 percent of global DRAM and NVMe production. When that much demand is concentrated in the hands of four buyers, supply chains do not adjust gently. They bend. Prices rise. Lead times stretch. Smaller buyers find themselves at the back of the line.

Enterprise customers are now experiencing something they have not seen since pandemic shortages: Orders placed in good faith are repriced weeks later. Six- to nine-month lead times are becoming routine. System vendors are doing their best to preserve margin neutrality while their component costs spike underneath them.

Six months ago, a 100-petabyte hybrid disk cluster in our portfolio cost approximately $4.8 million. A 100-petabyte hybrid flash architecture, combining TLC and QLC, cost approximately $10 million. At the time, it was intuitive to me that hybrid flash architectures would, within a few years, surpass disk in density and approach economic parity. The semiconductor roadmap and Moore’s Law pointed in that direction.

Then the AI boom hit.

Today, that same 100-petabyte hybrid disk cluster costs roughly $6 million, a 25 percent increase. The hybrid flash equivalent costs approximately $39 million, nearly four times its price six months ago. The economics did not gradually converge. They violently turned.
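The arithmetic behind that shift can be checked with a short sketch. It uses only the four prices quoted above; the per-terabyte figures assume decimal units (1 PB = 1,000 TB), and the function and variable names are illustrative rather than part of any product.

```python
# Prices quoted in the text, in millions of US dollars for a 100 PB cluster.
PRICES_MUSD = {
    "hybrid disk": {"six_months_ago": 4.8, "today": 6.0},
    "hybrid flash": {"six_months_ago": 10.0, "today": 39.0},
}

# Assumption: decimal units, so 100 PB = 100,000 TB.
CAPACITY_TB = 100_000


def summarize(prices, capacity_tb=CAPACITY_TB):
    """Return price multiple, percent increase, and $/TB for each architecture."""
    rows = {}
    for name, p in prices.items():
        before, after = p["six_months_ago"], p["today"]
        rows[name] = {
            "multiple": after / before,
            "pct_increase": (after - before) / before * 100,
            "usd_per_tb_before": before * 1e6 / capacity_tb,
            "usd_per_tb_after": after * 1e6 / capacity_tb,
        }
    return rows


def flash_premium(prices, when):
    """Ratio of hybrid flash price to hybrid disk price at a point in time."""
    return prices["hybrid flash"][when] / prices["hybrid disk"][when]


if __name__ == "__main__":
    for name, r in summarize(PRICES_MUSD).items():
        print(
            f"{name}: {r['multiple']:.2f}x ({r['pct_increase']:.0f}% increase), "
            f"${r['usd_per_tb_before']:.0f}/TB -> ${r['usd_per_tb_after']:.0f}/TB"
        )
    print(f"flash premium six months ago: {flash_premium(PRICES_MUSD, 'six_months_ago'):.1f}x")
    print(f"flash premium today: {flash_premium(PRICES_MUSD, 'today'):.1f}x")
```

On these numbers, the flash premium over disk moves from roughly 2x to 6.5x, which is the sense in which the curves diverged rather than converged.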

Why? Because semiconductor fabrication plants require years to build and ramp. HDD manufacturing, by contrast, has been historically scaled and optimized for hyperscale bulk storage for more than a decade. Hyperscalers have consumed hard drives at enormous volume for cloud object stores since the early 2010s. The HDD supply chain is mature, amortized, and predictable – and even it is facing significant pressure.

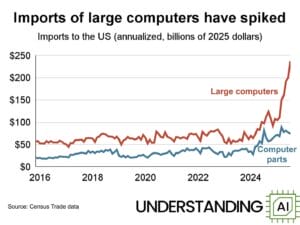

NVMe and advanced stacked flash, however, were not historically consumed in hyperscale quantities until AI model development in the cloud began demanding extraordinary density and throughput. The semiconductor ecosystem was caught flat-footed. The demand curve was vertical. The supply curve was fixed.

The result is a temporary, but powerful, asymmetry.

Will hyperscaler costs rise? Historically, no. The largest cloud providers operate under long-term procurement agreements, often locking in supply years in advance. They negotiate at scale no enterprise can match. In fact, rising on-premises component prices may strengthen their competitive position. As enterprise hardware becomes more expensive and unpredictable, the relative stability of cloud pricing becomes more attractive. This is unlikely to be a coordinated effort, but the second-order effects amount to existential crises for two groups: neoClouds that lack the procurement scale of a hyperscaler or even a large systems OEM, and vendors who have built contractual obligations around forward NVMe pricing and availability.

To the hyperscalers, this is a virtuous cycle. Their scale lowers their marginal cost of compute and storage. Their spending tightens supply elsewhere. That tightness makes their services more comparatively compelling.

To on-premises infrastructure vendors and enterprise IT departments, it can feel like a vicious one.

This is precisely the moment when architectural discipline matters more than ever.

The enterprise data market across edge, core, and cloud remains fragmented among legacy scale-up arrays, scale-out NAS appliances, point flash vendors, and cloud-locked file services. Many of these systems were built for a world of relatively stable component pricing and predictable refresh cycles. That world has changed.

In periods of volatility, flexibility is strategy.

At Qumulo, we designed our data platform around hardware optionality. We support dense hybrid disk, hybrid flash, and pure NVMe configurations. We run on standard x86 servers and in every major public cloud. That was not a marketing choice. It was a supply-chain hedge.

When flash pricing surges, customers can pivot to dense HDD configurations without sacrificing performance. When flash normalizes, they can rebalance toward higher performance tiers. With our Stratus architecture and Cloud Data Fabric, data can burst into the cloud for compute acceleration or leverage hyperscaler object stores as a cost-effective backing tier without re-platforming applications.

It is worth emphasizing a counterintuitive truth: the world’s largest cloud object stores are predominantly built on hard drives. The hyperscale object model has relied on disk economics for over a decade. The HDD ecosystem is not new. It is battle-tested and able to absorb hyper-scaling better than more nascent technologies.

The real dislocation today is in advanced flash.

Meanwhile, compression, object-based write optimization, and predictive read caching that consistently delivers 92 to 95 percent accuracy enable hybrid disk architectures to achieve performance characteristics that rival or exceed competitors’ all-flash arrays for many enterprise workloads. When flash becomes scarce, intelligence becomes the substitute.
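To see why cache accuracy matters so much, here is a minimal sketch of the standard weighted-average latency model. It rests on two loud assumptions that are not from the text: that the 92 to 95 percent prediction accuracy can be read as a cache hit rate, and that a flash hit costs 0.2 ms while a disk miss costs 10 ms. Both latencies are hypothetical round numbers, not measurements of any system.

```python
# Hypothetical latencies, chosen only for illustration (not measured values).
FLASH_HIT_MS = 0.2   # assumed cost of a read served from the flash cache
DISK_MISS_MS = 10.0  # assumed cost of a read that falls through to disk


def effective_read_latency_ms(hit_rate, fast_ms=FLASH_HIT_MS, slow_ms=DISK_MISS_MS):
    """Average read latency as a hit-rate-weighted mix of fast and slow tiers."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * fast_ms + (1.0 - hit_rate) * slow_ms


if __name__ == "__main__":
    # Assumption: treat the quoted 92-95% accuracy as the cache hit rate.
    for hit_rate in (0.0, 0.92, 0.95, 1.0):
        latency = effective_read_latency_ms(hit_rate)
        print(f"hit rate {hit_rate:.0%}: {latency:.3f} ms average read latency")
```

Under these assumed latencies, most of the gap between an all-disk and an all-flash read path closes once the hit rate passes 90 percent, and each additional point of accuracy removes a meaningful share of what remains.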

That is the central lesson.

In technology markets, we historically assume that physics determines cost curves. In reality, economics does. When a handful of companies command hundreds of billions in capital expenditure, they reshape not only their own infrastructure, but the pricing environment of everyone else.

The semiconductor industry will respond. New fabs will come online. Supply will normalize, perhaps in 24 to 36 months, perhaps longer. But in the interim, enterprises must operate in the world as it is, not as it was forecast.

There are two durable strategies in such an environment.

First, architect for portability. Systems that run only on proprietary hardware or in a single cloud will inherit the pricing volatility of that substrate. Systems that abstract hardware and operate across edge, core, and multi-cloud environments preserve negotiating leverage.

Second, prioritize efficiency over raw performance. When components are scarce, software intelligence, predictive caching, compression, and unified namespace design create economic resilience.

Periods of supply shock reward companies that invested in architectural optionality before it was fashionable.

The AI boom has created extraordinary opportunity. It has also exposed fragility in supply chains many assumed were infinite. The hyperscalers are playing a long game, and they are playing it well. But enterprises are not without agency.

The winners over the next five years will not simply be those who buy the most hardware. They will be those who design systems that remain rational when hardware markets are not.